July 5, 2009

Development of the Mars Flyer iPhone App

My app Mars Flyer just hit the iTunes store, and I decided it would be a great time to talk about the development project behind it. I have two motivations for doing this; one is to discuss some of the more interesting aspects of the app's development, and the other is to show the kind of work and time that goes into a complex app. All too often, App Store customers dismiss an app or bitch about its price, when it's clear they have no idea what goes into the development effort.

The idea for this app came from a Mars airplane project I worked on a few years ago. It makes a lot of sense to use robotic aerial platforms to explore other planets in our solar system, and in the case of Mars, a rocket powered airplane turns out to be an optimum configuration. With this type of mission, we could bridge the gap between small "local" scale measurements of landers and rovers and large "global" scale measurements of orbiters. By covering hundreds of kilometers in a short amount of time, an airplane could obtain a whole new class of atmospheric and geological data from Mars. Just think about the way airplanes have changed the way we live on Earth, and you can start to understand how they could revolutionize our exploration of other planets.

So the Mars airplane brings a good starting theme to the table. Then what? It's all too easy to build an app around a theme that doesn't really excite anyone. In this case, I wanted to focus on exploration and look at Mars in detail. It really is a fascinating and beautiful planet with all sorts of cool features. Imagine if you could explore Mars using an iPhone (or iPod Touch). Imagine if you could have a whole planet's worth of high-resolution color imagery in your pocket. Imagine if you could fly anywhere on that planet and look around. When I considered the possibilities, I started to get pretty excited. It's a novelty for sure, but novelty apps sell pretty well. And this one would be based on some real science and compelling visualization, not just making fart noises.

Right away, I knew I had to use high resolution terrain data. Most OpenGL games have to approximate terrain in order to make fast rendering practical and to handle things like 3D lighting. As a result, the terrain can look blocky, planar, and pixellated, and it ends up being unrealistic. It's ironic that the quest for 3D actually takes some realism away from things like terrain, but that kind of compromise is acceptable for a game where terrain is not the focus. On the other hand, this app is not a game, and the terrain is not merely a background. Terrain would have to be the focus of this app.

That steered me towards public-domain Mars imagery available from the USGS. The data originates from various NASA orbiter missions over the years, but the USGS has taken the data and really done a great job making it accessible. The only question was how to extend that to an iPhone app in a usable way.

I considered many possibilities in the beginning, knowing that it would take a lot of testing, optimization, and compromises to work on the iPhone. I could set up some canned flights over Mars, where the app dictated the terrain data, but that wasn't too exciting (plus I would have to spend a lot of time manually cataloging sites of interest and deciding which ones to include in the app). I could allow the user to fly anywhere, adjusting course in real time, but that wasn't practical; there is no way to load an entire planet's worth of high resolution terrain data without all sorts of overhead issues and interruptions for buffering. Finally, I settled on a strategy where the user picks an arbitrary flight route (by setting start and end points along a fixed heading) and then the airplane flies that pre-defined route. On paper, that looked like the best approach.

I was able to locate a Mercator topographic map of Mars that could serve as a front end for the app, allowing the user to lay out a flight path. And the USGS provides access to photographic Mars Viking imagery rendered with the same Mercator projection, which could serve as the app's terrain. From a user-specified flight route on the map, I could technically find the subset of USGS imagery needed by the iPhone to render the terrain along that route. I'll call this subset the "stencil".

With this approach in my head, I skipped over the map and flight path selection to start. I knew that would be a sub-project of its own, and first I wanted to flesh out the stencil approach on the actual terrain. So I started by picking two latitude and longitude test points defining a flight path and went right to work.

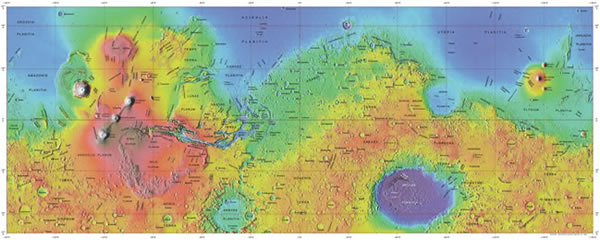

When you get high resolution Mars terrain imagery of the whole planet from the USGS, it comes as a really BIG image. At the best resolution, the image was about 10,000 pixels high by about 23,000 pixels wide and over 700MB in size. Here's a 2.6% scale version of that image, showing Mars over ±60° latitude and ±180° longitude using Mercator projection:

At full size, an image that big is barely practical to work with on a desktop computer, never mind an iPhone. So I knew I needed to break it up into thousands of small tiles. The iPhone app would store all those tiles internally as resources, which leads to challenge #1: getting them into an efficient size and format.

When considering tile size, there are many factors to weigh. While the rendering scheme used by PowerVR graphics processors in the iPhone and iPod Touch is smart enough to skip hardware rendering for pixels that fall outside the screen area (say, for tiles where some portion spills offscreen), you still need to think about software OpenGL calls and memory requirements. Smaller tiles are a more efficient way to fill an arbitrarily oriented/shaped scene since they have an effectively higher working resolution, but they require more OpenGL drawing calls, including binding to each tile texture before drawing. Large tiles can be wasteful when painting a scene, but require fewer drawing calls. Texture mapping can bridge the gap between these two approaches, but brings other pros and cons along. In the end, some very basic geometry considerations, combined with trial and error experimentation, led me to use 128x128 tiles in PNG-8 format. Here's an example tile at full resolution as used in the iPhone app:

But even that wasn't simple. You see, if you convert the imagery to PNG-8 format before splitting into tiles (which is the natural time to do it as a single operation), you are allocating an 8-bit color-space's available colors (256 of them) over the entire big image above. Since there were more than 256 colors in the original image, that means some colors were left out of the 8-bit approximation and the overall effect was to flatten the image color slightly (not bad, but definitely noticeable in some areas of the planet). On the other hand, if you convert to PNG-8 after tiling, each small tile has its own 256-color palette, which is more than enough colors to cover the content of the tile in this case (plus, any unused colors get thrown out to save space). This is a rather subtle difference when you consider the order of image processing operations, but an important one.
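To make the ordering issue concrete, here is a minimal Python sketch (not the actual tooling used for Mars Flyer). It builds a small synthetic image, an assumption standing in for the real terrain, and compares the number of distinct colors in the whole image against the number in each tile. The helper names `split_into_tiles` and `distinct_colors` are made up for illustration.

```python
def split_into_tiles(image, tile_size):
    """Yield square tiles (as lists of rows) from a 2D list of RGB pixels."""
    height, width = len(image), len(image[0])
    for top in range(0, height, tile_size):
        for left in range(0, width, tile_size):
            yield [row[left:left + tile_size] for row in image[top:top + tile_size]]


def distinct_colors(pixels):
    """Count the unique colors in a 2D list of RGB pixels."""
    return len({pixel for row in pixels for pixel in row})


# Synthetic 128x128 stand-in for the terrain: the color changes every 4 pixels.
image = [[(x // 4, y // 4, 0) for x in range(128)] for y in range(128)]

whole_image_colors = distinct_colors(image)
per_tile_colors = [distinct_colors(tile) for tile in split_into_tiles(image, 32)]

print(whole_image_colors)    # 1024: too many for one shared 256-entry palette
print(max(per_tile_colors))  # 64: every tile fits its own palette with room to spare
```

A single palette for the whole image has to merge colors, while a palette per tile does not, which is the flattening effect described above.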

In the end, the full terrain image above was broken into 3780 tiles, totaling about 45MB on disk. I could have doubled resolution of the terrain, bringing the totals to 15120 tiles and about 180MB of file storage, but that turned out to be impractical with the current state of performance and storage that I was comfortable with on the iPhone. I plan to revisit this decision in the future, possibly making the larger data set a free upgrade or in-app purchase option for people with an iPhone 3GS, which can better handle the performance and storage requirements.
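The tile-size trade-off and the totals above can be roughed out with a little arithmetic. The Python sketch below is only an estimate under stated assumptions: a 480x320 screen, and a deliberately pessimistic bound that treats the rotated screen as a square whose side is the screen diagonal. The function names are hypothetical.

```python
import math

SCREEN_W, SCREEN_H = 480, 320  # iPhone / iPod Touch screen of that era, in pixels


def worst_case_tiles_on_screen(tile_size, screen_w=SCREEN_W, screen_h=SCREEN_H):
    """Upper bound on tiles touching the screen at any rotation and offset.

    The rotated screen always fits inside an axis-aligned square whose side
    is the screen diagonal, and a span of that length can overlap at most
    ceil(diagonal / tile_size) + 1 tiles along each axis.
    """
    diagonal = math.hypot(screen_w, screen_h)
    per_axis = math.ceil(diagonal / tile_size) + 1
    return per_axis * per_axis


def scaled_tile_set(tile_count, megabytes, resolution_factor):
    """Scaling linear resolution by a factor scales tile count and storage by its square."""
    area_factor = resolution_factor ** 2
    return tile_count * area_factor, megabytes * area_factor


for size in (64, 128, 256):
    print(size, worst_case_tiles_on_screen(size))  # 64 -> 121, 128 -> 36, 256 -> 16

print(scaled_tile_set(3780, 45, 2))  # (15120, 180)
```

By this bound, 128x128 tiles stay under the 40-texture end of a per-frame budget, while 64x64 tiles would not.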

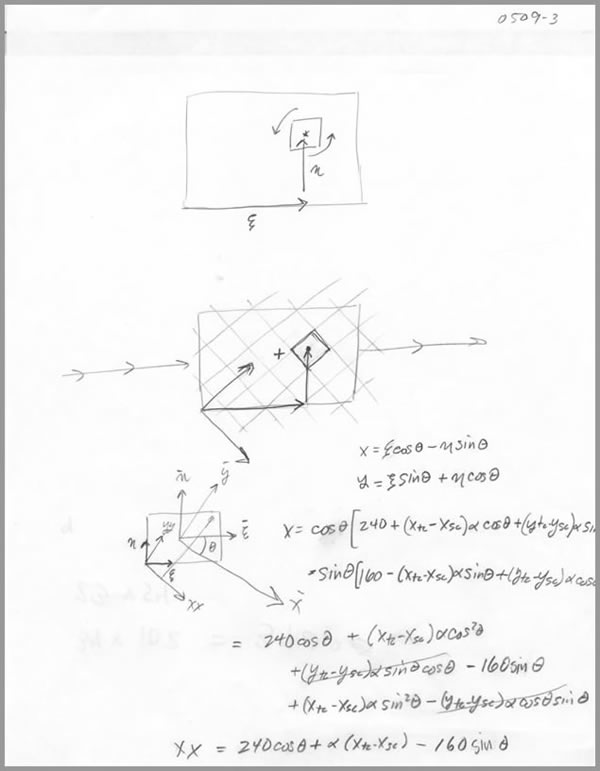

Challenge #2 is to only load into memory those tiles needed for the current flight stencil. Going into this, I had some rough guidelines for OpenGL ES performance on the iPhone based on some of my earlier apps and games. I knew it would be feasible to store a few hundred OpenGL textures in memory at a given time, and it would be possible to render 40-100 textures per frame at the 50-60Hz frame rate I like to shoot for. The trick was to choose a stencil that would minimize both storage and rendering requirements. For the former, that meant carefully choosing and allocating tiles that were needed for the flight. For the latter, that meant only drawing tiles that were needed on the screen at a given point along the flight. Here are the beginnings of my stencil model:

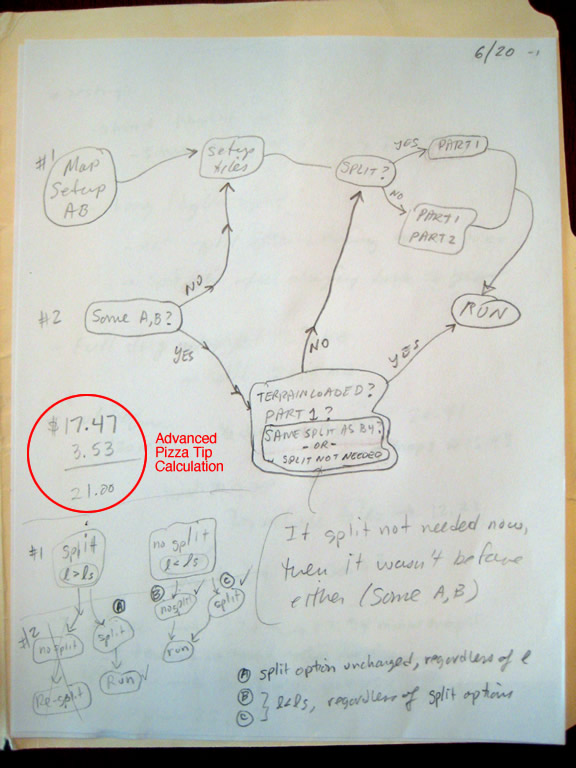

That reminds me -- I ended up with quite a stack of notes for this project, about an inch thick. Normally I think about something, code it, and then throw away whatever sticky notes and napkins I doodled on during the process. During this project, it became obvious pretty quick that I needed good notes I could refer to. Towards the end of the project, I was still going back to some of the very earliest pages in that collection, so it was a good idea.

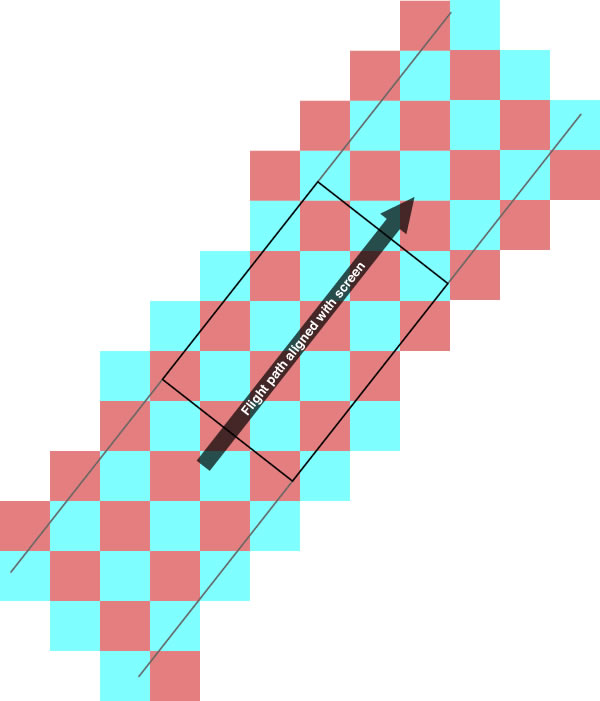

Anyhow, the stencil boils down to very simple geometry; basically, deciding how square tiles (situated in a rectangular coordinate system aligned with the terrain imagery) lie within the screen boundaries for some flight path along an arbitrary heading, and then later, what subset of those tiles show up on a screen at some point along the flight. Graphically, it looks like this:

So, in a preprocessing step, I determine all the tiles needed for the entire flight path, including a U-turn at each end. A simple distance formula could be used for something like this, to find tiles with centers that are within a certain radius of the flight path centerline, but that ends up being wasteful if blindly applied with a fixed radius (perhaps related to screen dimension). I was able to fine tune the stencil tile selection a bit further by taking the flight heading into account and transforming coordinates into a frame aligned with the heading, where simple inequalities could be used to decide if a tile was needed or not. This cuts down on the number of tile textures allocated and saves a fair bit of memory. Then, while rendering the flight later on, a similar method is used to ensure that only those textures with some portion falling within the screen bounds are drawn for each frame. This minimizes the number of OpenGL drawing calls.
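The heading-aligned test described above might look something like the following Python sketch. It is an illustration under assumptions, not the app's code: `stencil_tiles`, `half_width`, and `end_margin` are hypothetical names, coordinates are terrain pixels, and each tile is padded by its half-diagonal so that a tile whose center falls just outside the corridor but whose corner pokes in is still kept.

```python
import math


def stencil_tiles(start, end, tile_size, half_width, end_margin):
    """Return (col, row) indices of tiles that may overlap the flight corridor.

    start, end  -- route end points in terrain pixel coordinates
    half_width  -- half the corridor width across the heading (hypothetical)
    end_margin  -- extra length past each end point, e.g. for a U-turn (hypothetical)
    """
    x0, y0 = start
    x1, y1 = end
    length = math.hypot(x1 - x0, y1 - y0)
    if length == 0:
        raise ValueError("start and end must differ")
    ux, uy = (x1 - x0) / length, (y1 - y0) / length  # unit vector along the heading
    pad = tile_size * math.sqrt(2) / 2               # tile half-diagonal

    # Axis-aligned bounding box of the corridor limits the candidate tiles.
    reach = math.hypot(half_width, end_margin) + pad
    col_min = math.floor((min(x0, x1) - reach) / tile_size)
    col_max = math.floor((max(x0, x1) + reach) / tile_size)
    row_min = math.floor((min(y0, y1) - reach) / tile_size)
    row_max = math.floor((max(y0, y1) + reach) / tile_size)

    selected = []
    for row in range(row_min, row_max + 1):
        for col in range(col_min, col_max + 1):
            dx = (col + 0.5) * tile_size - x0
            dy = (row + 0.5) * tile_size - y0
            along = dx * ux + dy * uy    # distance along the heading
            cross = -dx * uy + dy * ux   # signed distance off the centerline
            if (-end_margin - pad <= along <= length + end_margin + pad
                    and abs(cross) <= half_width + pad):
                selected.append((col, row))
    return selected


tiles = stencil_tiles((0, 0), (1000, 1000), tile_size=128, half_width=100, end_margin=0)
print((3, 3) in tiles, (7, 0) in tiles)  # True False
```

Indices can come out negative or past the edge of the tile set near the map boundaries; a caller would need to clip or wrap them. The same two inequalities, with the corridor replaced by the current screen rectangle, would serve for per-frame culling.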

There were a zillion other small issues associated with rendering the flight path, but nothing of note; just the kinds of details that go along with every scrolling OpenGL animation. I drew the airplane of course, with a rocket exhaust plume and ailerons that key into the accelerometer, letting the user tilt the device to control speed and position (within the bounds of the pre-determined flight path). I added a compass overlay to show the heading, and a small overview route to indicate progress along the flight path. In the end, performance was great and the scene looks pretty nice:

Of course, that was running on hardwired start/end points of a flight route. The next big job was to let the user specify those points in an efficient manner. I found a great topographic Mercator map from the USGS that would fit the bill almost perfectly, shown here in reduced size:

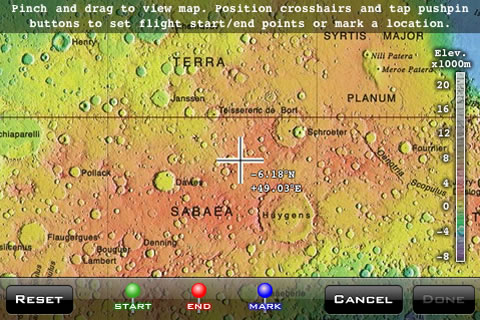

This also had to be tiled to draw efficiently in OpenGL, but it was much simpler to work out in this case. I was able to pull the map into the app and set up a basic "pinch and drag" operation to zoom and move around. Now it was a matter of locating points and defining a route. I liked the idea of using pushpins a la the iPhone's built in Maps app. My initial setup let the user drag pushpins onto the screen and move them around with a finger. This was pretty cool, but it became obvious that it was inefficient when I wanted to drag pushpins over long distances or fine tune their position. So I punted that cool idea and simply used crosshairs fixed to the middle of the screen to act as a target:

Tapping any of the pushpin buttons places a pushpin at the crosshairs, leaving dragging motions free to handle movement of the map alone. This ends up working out great. Simple usually beats cool every time. I used a green pushpin for the route start, red for the end, and blue to mark locations (sort of like bookmarks) for easy reference in the future. With end points specified, the flight path is shown:

So now I have the route specified in pixel coordinates on the map. To get that to corresponding coordinates on the terrain tiles takes a couple steps. Horizontally, the map and the terrain both have a linear scale from -180° to +180° longitude, so it's easy to convert along that axis just using proportionality (based on relative sizes of the map and the terrain tile set). Vertically, in the latitude direction, both use a nonlinear scale associated with the Mercator projection. This progressively enlarges the size of true terrain as you move away from the equator (on a real globe, stuff gets smaller for a fixed angular arc, so flattening it out onto a rectangular sheet enlarges it).

In the latitude direction, I had to convert pixel position on the map to radians, and run that through a Mercator formula twice (once forward, once in reverse) to get a corresponding pixel position on the terrain tile collection. To allow for screen coverage when flying along the top and bottom edges of the map, the tile collection actually had to extend farther from the equator than the map, requiring two different Mercator formulas. But in the end, I learned a whole lot about map projections, which was really interesting.
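Here is a hedged Python sketch of that two-step conversion using the standard spherical Mercator formulas, y = ln(tan(π/4 + φ/2)) and its inverse φ = 2·atan(e^y) − π/2. The image sizes and latitude limits in the example call are placeholders chosen for illustration, not the app's real values, and the function names are hypothetical.

```python
import math


def mercator_y(lat_rad):
    """Forward Mercator: latitude in radians to projected y (unit sphere)."""
    return math.log(math.tan(math.pi / 4 + lat_rad / 2))


def inverse_mercator_y(y):
    """Inverse Mercator: projected y back to latitude in radians."""
    return 2 * math.atan(math.exp(y)) - math.pi / 2


def pixel_to_latitude(row, height, lat_limit_deg):
    """Row 0 is the top edge (+lat_limit); row == height is the bottom edge."""
    y_max = mercator_y(math.radians(lat_limit_deg))
    return inverse_mercator_y(y_max * (1 - 2 * row / height))


def latitude_to_pixel(lat_rad, height, lat_limit_deg):
    """Latitude in radians to a pixel row in an image spanning +/- lat_limit."""
    y_max = mercator_y(math.radians(lat_limit_deg))
    return height / 2 * (1 - mercator_y(lat_rad) / y_max)


def map_to_terrain(col, row, map_size, map_lat_limit, terrain_size, terrain_lat_limit):
    """Convert a map pixel to the matching terrain pixel."""
    map_w, map_h = map_size
    terrain_w, terrain_h = terrain_size
    terrain_col = col * terrain_w / map_w                 # longitude: plain proportion
    lat = pixel_to_latitude(row, map_h, map_lat_limit)    # map row to latitude
    terrain_row = latitude_to_pixel(lat, terrain_h, terrain_lat_limit)
    return terrain_col, terrain_row


# Placeholder numbers: a 1000x500 map spanning +/-57 deg latitude and a
# 4000x2200 terrain image spanning +/-60 deg latitude.
print(map_to_terrain(500, 250, (1000, 500), 57, (4000, 2200), 60))
```

With a wider latitude limit on the terrain side, the top row of the map lands some distance below the top row of the terrain, which leaves spare tiles for screen coverage along the map's upper and lower edges.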

Speaking of screen coverage, I also ran into an issue when flying along the right or left edges of the map, near the ±180° meridians. Of course the Mercator map and terrain imagery stop at those boundaries, but in real life you'd just see the other side of the meridian (i.e., right and left edges of the map wrap around to touch each other in reality). There are a number of ways to handle this, the most primitive being to pad the left and right edges of the terrain imagery with more tiles copied from the opposite side. But it required about 4 layers of tiles to account for the various situations where a flight could run along or make a u-turn at each boundary meridian, and adding 8 layers of tiles to an already large data set doesn't make sense.

So, I used a "ghost cell" (better called "ghost tile" in this case) technique popular in numerical simulations on structured grids (part of my day job). For all practical purposes, the code thinks there are tiles on the other side of the boundary meridians, but the data actually just points to what's already in the main tile set, grabbing imagery from the opposite edge of the map in reverse order. It's what we'd call a periodic boundary condition in a numerical simulation, but it really makes sense here since we're dealing with actual cyclic angle scales.
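In code, a periodic boundary like this usually comes down to wrapping the column index. The Python sketch below shows the idea; `resolve_tile` and the 90x42 grid are assumptions for illustration (the post gives the tile total but not the grid layout).

```python
def resolve_tile(col, row, num_cols, num_rows):
    """Map a possibly out-of-range tile index onto the stored tile set.

    Columns wrap around the +/-180 degree meridian (periodic in longitude).
    Rows do not wrap: there is no imagery beyond the top and bottom edges.
    """
    if not 0 <= row < num_rows:
        return None
    return col % num_cols, row  # Python's % always returns a non-negative result here


NUM_COLS, NUM_ROWS = 90, 42  # hypothetical grid layout

print(resolve_tile(-1, 3, NUM_COLS, NUM_ROWS))   # (89, 3): ghost tile left of the map
print(resolve_tile(91, 3, NUM_COLS, NUM_ROWS))   # (1, 3): ghost tile right of the map
print(resolve_tile(10, 42, NUM_COLS, NUM_ROWS))  # None: off the bottom edge
```

No tiles are duplicated on disk or in memory; a ghost index simply resolves to a texture that already exists.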

The entire process I discussed to this point took about 120 hours of labor, and this was really just to rough out the working aspects of the app so that I could see it in action. I charge $120/hour when I do iPhone development work, so it would have cost a client about $14K to get to this point. But the underpinnings of this app were copied from my Mission 22 game, which provided the very basic framework for a 2D scrolling OpenGL animation with physics, sound, accelerometer inputs and smoothing, various interface elements, user preferences, and so on. Leveraging Mission 22's basic shell tapped into another 15-20 hours and around $2000 worth of labor. So all said, we're at about 140 hours and $16K at this point.

This is when I like to start living with an app as a user, and let other people (in this case my wife) give it a try. After working for weeks on an app, mostly from 7pm-1am, I become a bit too focused on the late night developer's perspective. That was especially true in this case, with all the math and geometry I had to slog through. So it was time to take a break.

Watching someone else use your app for the first time is a gold mine of information. If it's your wife and someone who you can speak frankly with, even better. You might not hear what you want to hear, but it's exactly what's needed to break weeks of developer focus and get a fresh perspective. My wife had loads of critique, ranging from "what the hell is this for?" to "I don't see the point of this" to "this background music makes me want to stab pencils in my ears". I had a full sheet of notes after watching her use the app that first time.

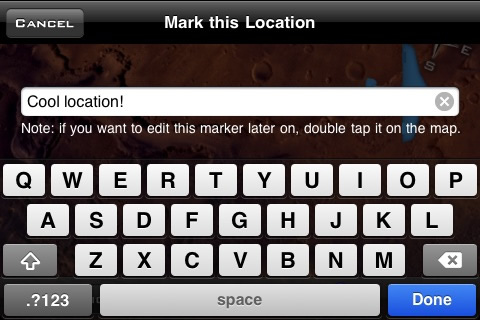

Many of her comments could be answered with a simple explanation. But that's a red flag; the developer shouldn't have to explain anything to a typical user. I don't even want to have to explain stuff on my website or in documentation if I can help it. It's the old "keep it simple, stupid" (KISS) mantra. So I set about tweaking the app to make things more obvious and more logical. That ranged from subtle color and wording changes here and there to small notes and suggestions throughout the app. For instance, when a user places a blue location marker for the first time, I put a small note on the popup keyboard telling them how to edit it in the future:

And so on. I implemented about two dozen small changes, which led to a few others. It really improved usability of the app quite a bit. Don't underestimate the value of good honest review.

Now, the comment about the background music was a mixed blessing. After my revisions, Mars Flyer was ready to be submitted to the App Store, and would have run on any OS from 2.0 onward. I had rung up about 180 hours of labor for a total of nearly $22K in developer time at that point. I figured that implementing access to the iPod music library would probably require a few more days' time, and I knew it would require users to have OS 3.0 installed if they wanted to use the feature, potentially limiting my market a little (iPod Touch users are slow to upgrade to new OS releases, as are some iPhone users). But after thinking about it for a bit, I decided to put my final candidate build aside and take a shot at incorporating the music feature in my initial release. It's important to make a good first impression in the App Store, and sometimes that means accelerating development of potential 0.1 upgrades and features so that they make it into the 1.0 release.

I started out with a simple implementation, using one of Apple's developer examples to show how to tap into the music library (this is a new feature in the 3.0 SDK). I went into this thinking that the feature would appeal to people like my wife who didn't want to hear a canned background track. Boy was I totally missing the boat. The inclusion of a custom music soundtrack completely changed the app. The first time I listened to a playlist of songs from Wilco's melancholy "Sky Blue Sky" album while flying over the Valles Marineris area of Mars, I knew this app was destined to have a custom soundtrack. With music accompanying the rich visual experience, the app transcended far beyond what I had envisioned, and beyond just a simple Mars exploration tool.

True to any serious project I take on, high points like this always push you back down into the depths of reality. I soon discovered that implementing iPod library access brought with it many obstacles, leading to another 60 hours ($7200) of developer time.

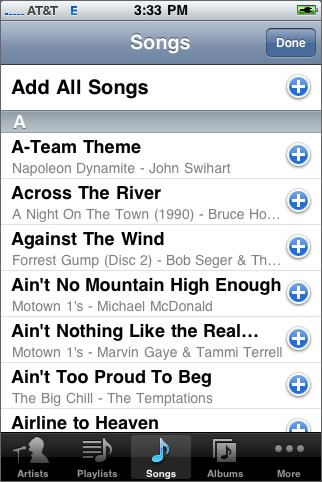

The first problem was trying to implement Apple's MPMediaPickerController into my landscape app. This is the front end to the iPod library, allowing you to browse through songs and select them:

I'll make a really long story really short by noting that this little bastard is hardwired for portrait use. I could get it into various stages of landscape, but when the orientation was rotated correctly, the touch inputs and drags weren't. If I could get the sizing right, then the orientation was wrong. And so on. I even cashed in one of my Apple developer tech support incidents looking for a solution (they confirmed my conclusion about the portrait limitation).

I attempted to roll my own landscape media picker interface, but it became obvious that this would be a daunting task -- a project of its own really. And when I made a little progress, I found that manually querying the media library was slow. This was quickly becoming a dead end.

So I weighed the options and decided to file a feature request (it's like a real upbeat bug report with lots of ass kissing) with Apple for a landscape MPMediaPickerController in a future OS release. In the meantime, I cue users to rotate their device to portrait when they want to add songs to or change the playlist within Mars Flyer. It's not a solution I am 100% happy with, but I think it was the best choice in this case.

OK, so the music's playing, we're flying, and things are groovy. Only, this app that was running really well in OS 2.2.1 is suddenly getting all sorts of memory warnings from the system. I do have the app respond to memory warnings and release any unnecessary data. For instance, when flying over terrain, the map tiles can be ditched. When using the map, the terrain tiles can be ditched. And so on. But this wasn't enough under OS 3.0.

Two factors were at play here. One was a stubborn refusal of Safari, running in the background, to release its memory. There were cases when my app would get a memory warning right after launch, before it even allocated data, while Safari was sitting on a 20MB nest egg in the background! Under 2.2.1, I recall Safari being a bit more cooperative when system memory got low. It seems to be more of a crap shoot under 3.0. When Safari hangs on to 15-20MB, it puts tremendous pressure on the frontmost (active) app.

The other factor was that great new music feature I loved so much. Unfortunately, playing music (which operates in a background thread separate from the app) chews up its own 5-20MB of memory. So there's another process running on the device that is competing with the active app. In some cases, the music process could actually use up more memory than my app!

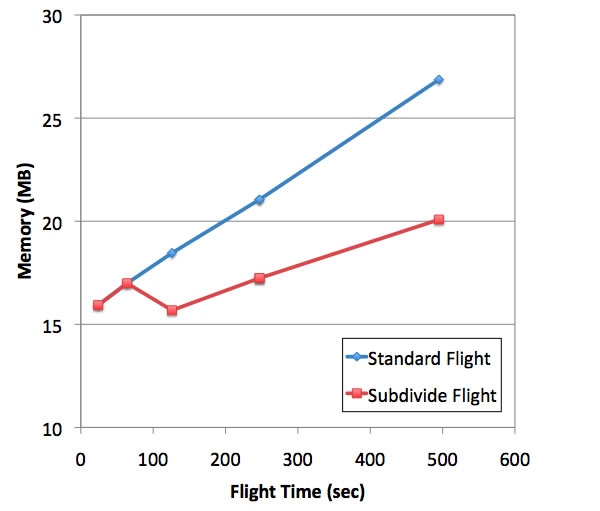

This all led to several days of what I call "code hardening", where I made Mars Flyer a lot more robust and flexible in the face of sparse memory resources. Under optimum conditions with good memory availability, the app can smoothly allocate and use up to about 27MB of memory. When memory is tight, the app can be more frugal and keep usage down in the 15-20MB range. One way I do this is by splitting long routes (those longer than 1/4 of the map diagonal) into two segments, with terrain tile buffering at the halfway point:

This required re-thinking the way I fly routes that already had start (A) and end (B) points defined, to avoid repeating tile stencil setup if possible:

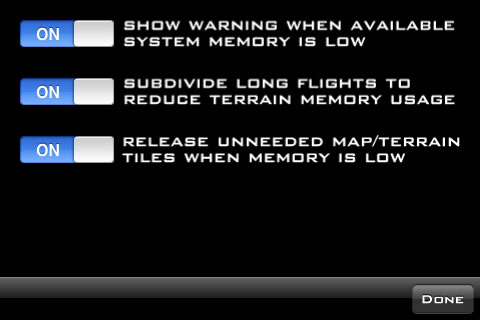
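The route-splitting rule mentioned above (routes longer than 1/4 of the map diagonal get two segments) can be sketched in a few lines of Python. This is only an illustration: `split_route` is a hypothetical name, coordinates are map pixels, and the 800x600 map in the example is a placeholder size.

```python
import math


def split_route(start, end, map_size, fraction=0.25):
    """Split a route at its midpoint if it exceeds a fraction of the map diagonal.

    Returns a list of (segment_start, segment_end) pairs, so the terrain tiles
    for each segment can be buffered separately.
    """
    diagonal = math.hypot(map_size[0], map_size[1])
    length = math.hypot(end[0] - start[0], end[1] - start[1])
    if length <= fraction * diagonal:
        return [(start, end)]
    midpoint = ((start[0] + end[0]) / 2, (start[1] + end[1]) / 2)
    return [(start, midpoint), (midpoint, end)]


# Placeholder 800x600 map: the diagonal is 1000, so the threshold is 250 pixels.
print(split_route((0, 0), (120, 160), (800, 600)))  # length 200: one segment
print(split_route((0, 0), (300, 400), (800, 600)))  # length 500: two segments
```

Only one segment's tiles need to be resident at a time, which roughly halves the peak texture memory for a long route at the cost of a buffering pause at the midpoint.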

I made some of the memory management options available for user tweaking:

Interestingly, memory is a non-issue on the new iPhone 3GS, because it contains double the RAM of earlier devices -- now 256MB. That effectively lets Safari and the background music process chew up memory without placing pressure on the frontmost app. Had I been developing on an iPhone 3GS all along, I may never have bumped into the memory issue at all. Which brings up a pickle: we'd better hope developers still continue targeting and testing their apps on older devices like iPod Touches, the iPhone 3G, and my 1st generation iPhone. If they get comfortable with the performance and memory capabilities of the 3GS, it spells trouble for users of older devices. Though I plan to get a 3GS for my own use, I hope to keep my older iPhone around specifically for development. It's now become a very relevant performance/memory baseline to test against.

Speaking of the 3GS, it runs Mars Flyer rather nicely. Through careful coding, the app will run at the intended 50-60Hz OpenGL frame rate on any device. But on the 3GS, animations run with less CPU overhead, as frame to frame calculations for tile and airplane positioning go more quickly. The biggest impact of the 3GS is noticed when first setting up a new route, when the app computes the tile stencil along the flight path and allocates OpenGL textures. The iPhone 3GS is easily 2-4 times faster in this regard. It makes a very noticeable reduction in the amount of time between setting up a long route and flying it. So that's pretty cool.

In the end, it required about 240 man-hours of developer time to take Mars Flyer from start to finish. That would work out to about 30 eight-hour days, or 6 work weeks if this were a typical full time job for one person. If doing this work for a client, it would have added up to just under $29K. The last thing I did for Mars Flyer was hire a pro web designer to put together a good page for this app. I really felt Mars Flyer deserved a good web presence, and I just don't have the artistic talent or design sense to do it myself. Throw that last task onto the bill, and we come out at a nice even $30K.
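For anyone who wants to check the arithmetic, the labor figures quoted throughout this post follow directly from the hourly rate. The short Python tally below uses only numbers stated in the text; the milestone labels are paraphrases, and the web designer's fee is left out because it isn't itemized.

```python
HOURLY_RATE = 120   # dollars per hour, as quoted in the post
HOURS_PER_DAY = 8
DAYS_PER_WEEK = 5

# Hour counts quoted at each milestone in the post.
milestones = [
    ("working prototype (before counting the reused Mission 22 shell)", 120),
    ("ready to submit, before the music feature", 180),
    ("final release", 240),
]


def labor_cost(hours, rate=HOURLY_RATE):
    """Labor cost in dollars for a number of hours at the hourly rate."""
    return hours * rate


for label, hours in milestones:
    print(f"{label}: {hours} h -> ${labor_cost(hours):,}")

total_hours = milestones[-1][1]
work_days = total_hours / HOURS_PER_DAY
work_weeks = work_days / DAYS_PER_WEEK
print(work_days, work_weeks)  # 30.0 6.0
```

The three totals come out to $14,400, $21,600, and $28,800, matching the "about $14K", "nearly $22K", and "just under $29K" figures.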

I decided to price the app at $3.99, taking many factors into account. One was pricing Mars Flyer relative to my whole app lineup, which includes everything from a free deal tracker to simple $0.99 games to a complex $8.99 vehicle performance computer. I think it's important to look at pricing this way, as it puts everything into perspective. I established prices for my first 2-3 apps in the early days of the App Store when pricing was based on sanity, and I am trying to stick with the same simple approach now: I pick a price that I believe is fair and reasonable.

I have no doubt that some potential customers will bitch about the $3.99 price, but these are the same folks that would probably bitch about a $2.99 or $1.99 price. I don't want those customers, because this app is not about price. I want customers who are interested in exploring Mars and listening to their music in a cool and different way, and who can appreciate the work and complexity that goes into making that happen. As I've seen during demos over the last couple weeks, people who get really excited about Mars Flyer don't even ask about the price. That's my target market, where the app sells itself.